December 2, 2025

Correlation to Causation

Solutions to Confounding

- Recap

- Before and After

- Differences in Differences

- What is it?

- How does it work?

- Assumptions?

- Example

Recap

Solutions to Confounding

Every way of using correlation as evidence for causality makes assumptions

- FPCI cannot be solved without assumptions

- With assumptions, can say confounding/bias is not a problem

@larreth: “These Jobs Will Fall First As AI Takes Over 🚨 My cousin just lost his data entry job in Bloemfontein. No warning, no ceremony. The company replaced his entire team with an AI system that does all their work in just minutes. It’s the canary in the coal mine. If your job involves predictable patterns or repetitive tasks, you’re already on borrowed time. 🕰️ The pattern is clear: AI is replacing routine jobs first. But here’s the silver lining: jobs like prompt engineering didn’t even exist two years ago. The work isn’t disappearing—it’s changing. And those who adapt will thrive. The real challenge? The window to prepare is closing faster than we want to admit. Are you ready to pivot? The time to future-proof your career is NOW.”

What might be some confounding variables if…

we just compared the number of people employed in jobs at risk / not at risk of AI replacement?

- What kind of Comparison is this?

- What must we assume for this to show the effect of AI?

What might be some confounding variables if …

we just compared employment in jobs at risk of AI replacement before and after mass adoption of AI?

| Solution | How Bias Solved | Which Bias Removed | Assumes | Internal Validity | External Validity |
|---|---|---|---|---|---|
| Experiment | Randomization breaks \(W \rightarrow X\) link | All confounding variables | 1. \(X\) is random 2. Change only \(X\) | Highest | Lowest |
| Conditioning | Hold confounders constant | Only variables conditioned on | 1. Condition on all confounders 2. Low measurement error 3. Cases similar in \(W\) | Lowest | Highest |
| Before and After | Hold confounders constant | Variables unchanging over time | No causes of \(Y\) change w/ \(X\) | Lower | Higher |

Before and After Limitations

Example: Gun Laws

Does easing restrictions on gun laws increase murders committed using guns?

- Some states in the US require all handgun purchasers to acquire a permit-to-purchase (PTP) license.

- Only persons with a permit may purchase firearms

- In late 2007, Missouri eliminated its PTP requirement

Example: Gun Laws

But, Before and After assumes that there is nothing else about Missouri that

- changed around the same time as the PTP (gun control) repeal (\(X\))

- and affected Firearms Homicides (\(Y\))

(or more technically, assume that \(\color{red}{\text{Murders}_{MO,After}[\text{No Repeal}]} = \color{black}{\text{Murders}_{MO,Before}[\text{No Repeal}]}\))

That is: no long-term trends, no changes in measurement, and no changes in crime that led to the PTP repeal (crime \(\to\) PTP repeal)

Example: Gun Laws

We want to compare the actual trend in Missouri:

\(\begin{equation}\begin{split}\text{Trend}_{MO} ={} & \color{black}{\text{Murders}_{MO,After}[\text{Repeal}]} - \\ & \color{black}{\text{Murders}_{MO,Before}[\text{No Repeal}]}\end{split}\end{equation}\)

against the counterfactual trend in Missouri:

\(\begin{equation}\begin{split}\color{red}{\text{CF Trend}_{MO}} ={} & \color{red}{\text{Murders}_{MO,After}[\text{No Repeal}]} - \\ & \color{black}{\text{Murders}_{MO,Before}[\text{No Repeal}]}\end{split}\end{equation}\)

\(\small{\begin{equation}\begin{split} \text{Effect of Repeal}_{MO} = {} & \overbrace{\{\text{Murders}_{MO,After}[\text{Repeal}] - \text{Murders}_{MO,Before}[\text{No Repeal}]\}}^{\text{Missouri observed trend}} - \\ & \underbrace{\{\color{red}{\text{Murders}_{MO,After}[\text{No Repeal}]} - \text{Murders}_{MO,Before}[\text{No Repeal}]\}}_{\color{red}{\text{Missouri counterfactual trend}}}\end{split}\end{equation}}\)

- Before and After assumes the counterfactual trend is always 0 (or continuation of linear trend)
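To make this concrete, here is a minimal sketch showing that the bias of a before-and-after comparison equals the counterfactual trend it ignores. All numbers are hypothetical (they are not Missouri's actual homicide rates), and the function names are illustrative.

```python
def before_after_estimate(y_before, y_after):
    """Before-and-after comparison: the observed change over time in one case."""
    return y_after - y_before


def true_effect(y_after, y_after_counterfactual):
    """Effect = observed outcome after treatment minus the counterfactual outcome."""
    return y_after - y_after_counterfactual


# Hypothetical outcome values for one treated case (NOT real data).
y_before = 4.0
y_after = 5.5

# Try three possible counterfactual "after" values: flat, rising, falling.
for cf_after in (4.0, 4.5, 3.5):
    cf_trend = cf_after - y_before
    estimate = before_after_estimate(y_before, y_after)
    effect = true_effect(y_after, cf_after)
    bias = estimate - effect  # always equals the counterfactual trend
    print(f"CF trend={cf_trend:+.1f}  estimate={estimate:.1f}  "
          f"effect={effect:.1f}  bias={bias:+.1f}")
```

Only the first scenario, with a counterfactual trend of 0, makes the before-and-after estimate equal to the effect.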

Many possible counterfactual trends…

Which counterfactual trend is right?


- What should the counterfactual trend be here?

Example: Gun Laws

We can’t know the counterfactual trend in Missouri…

but we can observe the trends in other states that did not change their gun purchasing laws (no change in Gun Control, \(X\)).

- We can plug in the \(\text{factual TREND}\) in an “untreated” case (no change in \(X\)) for the \(\color{red}{\text{counterfactual TREND}}\) in the “treated” case (where \(X\) did change).

Then, we can plug in

\(\small{\begin{equation}\begin{split} \text{Estimated Effect}_{MO} = {} & \overbrace{\{\text{Murders}_{MO,After}[\text{Repeal}] - \text{Murders}_{MO,Before}[\text{No Repeal}]\}}^{\text{Missouri observed trend}} - \\ & \underbrace{\{\text{Murders}_{AR,After}[\text{No Repeal}] - \text{Murders}_{AR,Before}[\text{No Repeal}]\}}_{\text{Arkansas observed trend}}\end{split}\end{equation}}\)
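The plug-in can be sketched in a few lines of Python. The rates below are made up for illustration only; they are not the actual Missouri or Arkansas figures, and `diff_in_diff` is a hypothetical helper name.

```python
def diff_in_diff(treated_before, treated_after, untreated_before, untreated_after):
    """Observed trend in the treated case minus observed trend in the untreated case."""
    treated_trend = treated_after - treated_before
    untreated_trend = untreated_after - untreated_before
    return treated_trend - untreated_trend


# Hypothetical firearm homicide rates per 100,000 (NOT real data).
mo_before, mo_after = 4.0, 5.5   # "treated": PTP requirement repealed
ar_before, ar_after = 4.5, 5.0   # "untreated": no change in law

estimate = diff_in_diff(mo_before, mo_after, ar_before, ar_after)
print(f"Missouri trend: {mo_after - mo_before:+.1f}")
print(f"Arkansas trend: {ar_after - ar_before:+.1f}")
print(f"Estimated effect of repeal: {estimate:+.1f}")
```

With these made-up numbers, the estimate is the Missouri trend (+1.5) minus the Arkansas trend (+0.5). It is only as credible as the assumption that Missouri would have followed the Arkansas trend without the repeal.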

How can we apply the same idea here?

Differences in Differences

Design Based Solution:

Like before and after, differences in differences comparisons are design based:

By comparing changes over time in “treated” (\(X\) changes) and “untreated” (\(X\) does not change) cases:

- hold constant all unchanging confounding variables in both treated and untreated cases

- hold constant all similarly changing confounding variables across treated and untreated cases

This works regardless of whether we have thought of those variables or can measure them.
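A small simulation can illustrate why the design works. This is a sketch under assumed values: the true effect, the common shock, and the baseline gap between groups are all invented, and the data are simulated rather than drawn from any study.

```python
import random


def simulate_comparisons(true_effect=2.0, n_units=2000, seed=0):
    """Simulate two-period data and compare three estimators of the effect."""
    rng = random.Random(seed)
    common_shock = 1.5       # changes over time, identically for every unit
    treated_baseline = 3.0   # unchanging confounder: treated units start higher
    totals = {(g, t): 0.0 for g in (0, 1) for t in (0, 1)}
    counts = {(g, t): 0 for g in (0, 1) for t in (0, 1)}
    for i in range(n_units):
        group = i % 2  # 1 = treated, 0 = untreated; alternate for equal sizes
        baseline = 10.0 + treated_baseline * group + rng.gauss(0, 1)
        for period in (0, 1):  # 0 = before, 1 = after
            y = (baseline + common_shock * period
                 + true_effect * group * period + rng.gauss(0, 1))
            totals[(group, period)] += y
            counts[(group, period)] += 1
    mean = {key: totals[key] / counts[key] for key in totals}
    before_after = mean[(1, 1)] - mean[(1, 0)]
    untreated_trend = mean[(0, 1)] - mean[(0, 0)]
    return {
        "cross_section": mean[(1, 1)] - mean[(0, 1)],  # biased by baseline gap
        "before_after": before_after,                  # biased by common shock
        "diff_in_diff": before_after - untreated_trend,
    }


results = simulate_comparisons()
for name, value in results.items():
    print(f"{name}: {value:.2f}")
```

Under these assumptions, the cross-sectional comparison should land near 5 (effect plus baseline gap), before-and-after near 3.5 (effect plus common shock), and differences in differences near the true effect of 2. The code never conditions on the baseline or the shock.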

Design: Difference in Differences

What is it?

- Compare changes in “treated” cases before and after “treatment” to before and after changes in “untreated” cases (always two or more groups)

How does it work?

- Hold constant unchanging attributes of cases (compare same case before and after “treatment”)

- Hold constant variables that change together over time in both “treated” and “untreated” cases

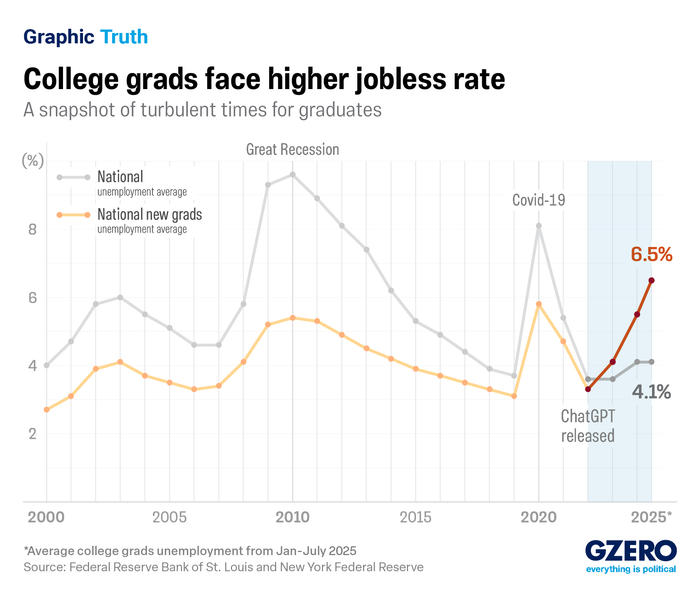

AI and Employment

- Some jobs are more or less exposed to AI replacement (\(X\))

- Compare employment (\(Y\)) in high and low AI exposure jobs before and after widespread AI adoption

- Examine differences across working-age cohorts (entry-level hires vs others)

AI and Employment

Measuring AI exposure:

- Human and LLM ratings of whether AI can perform occupational tasks more quickly (Eloundou et al 2024)

- Mapping of AI use data (from Claude) to occupational tasks (Handa et al 2025)

AI Exposure (\(X\)): degree to which job-specific tasks are replaceable with AI, by job category

- quintiles (top to bottom fifth of exposure)
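As a rough sketch of the quintile step, the code below sorts job categories by an exposure score and splits them into fifths. The job names and scores are placeholders, not the ratings from the cited studies, and numbering quintile 1 as the lowest-exposure fifth is an assumption.

```python
def assign_quintiles(exposure_by_job):
    """Map each job category to an exposure quintile (1 = lowest fifth, 5 = highest)."""
    ranked = sorted(exposure_by_job, key=exposure_by_job.get)
    n = len(ranked)
    # Integer arithmetic splits the ranked list into five nearly equal bins.
    return {job: (rank * 5) // n + 1 for rank, job in enumerate(ranked)}


# Placeholder job categories with made-up exposure scores between 0 and 1.
exposure = {f"job_{i}": i / 10 for i in range(10)}

quintiles = assign_quintiles(exposure)
for job, q in sorted(quintiles.items()):
    print(job, exposure[job], "-> quintile", q)
```

Ties in the score are broken by input order here. A real analysis would need to choose a tie-breaking rule and decide whether to weight by employment.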

AI and Employment

Measuring Employment:

- Company payroll data

- Firms recording employee pay from 2021 to 2025, job descriptions

- 3.5-5 million workers per month

Design: Difference in Differences

Why is it called difference in differences?

\(\small{\begin{equation}\begin{split} \text{Difference in Differences} = {} & \overbrace{\{\text{Jobs}_{High \ AI,After}[\text{AI}] - \text{Jobs}_{High \ AI,Before}[\text{No AI}]\}}^{\text{High AI Exposure observed trend}} - \\ & \underbrace{\{\text{Jobs}_{Low \ AI,After}[\text{No AI}] - \text{Jobs}_{Low \ AI, Before}[\text{No AI}]\}}_{\text{Low AI Exposure observed trend}}\end{split}\end{equation}}\)

Design: Difference in Differences

So:

- \(\mathrm{Difference \ 1} = Employment_{After} - Employment_{Before}\) gives us the trend in employment, computed separately for high and low AI exposure \(Occupations\)…

- holding unchanging attributes of occupations constant (difference over time)

- \(\mathrm{Difference \ 2} = \mathrm{Difference \ 1}_{High \ AI} - \mathrm{Difference \ 1}_{Low \ AI}\) gives us the change in employment in \(Treated\) over time, compared to the trend in \(Control\)

- holds similarly changing attributes of both groups constant (difference in trends)
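The two differences can be computed step by step from group-by-period records. This is a sketch with invented employment figures (an index, not the payroll data), and the group labels `high_ai` and `low_ai` are illustrative.

```python
from collections import defaultdict


def mean_employment(records):
    """Average employment for each (group, period) cell."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for group, period, employment in records:
        totals[(group, period)] += employment
        counts[(group, period)] += 1
    return {cell: totals[cell] / counts[cell] for cell in totals}


def two_differences(records):
    """Return Difference 1 for each group and Difference 2 between groups."""
    means = mean_employment(records)
    difference_1 = {
        group: means[(group, "after")] - means[(group, "before")]
        for group in ("high_ai", "low_ai")
    }
    difference_2 = difference_1["high_ai"] - difference_1["low_ai"]
    return difference_1, difference_2


# Made-up employment index values (NOT real payroll data).
records = [
    ("high_ai", "before", 100.0), ("high_ai", "before", 104.0),
    ("high_ai", "after", 96.0), ("high_ai", "after", 98.0),
    ("low_ai", "before", 100.0), ("low_ai", "before", 102.0),
    ("low_ai", "after", 103.0), ("low_ai", "after", 105.0),
]

difference_1, difference_2 = two_differences(records)
print("Difference 1:", difference_1)
print("Difference 2:", difference_2)
```

With these numbers, Difference 1 is -5 for high-exposure jobs and +3 for low-exposure jobs, so Difference 2 is -8.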

Design: Difference in Differences

Confounding Solved…

All confounding variables (affect employment, affect AI exposure) that are unchanging over time are held constant

- By comparing change over time within the same case

All confounding variables that change similarly in “treated” and “untreated” cases are held constant.

- By comparing change over time in “treated” to change over time in “control”

In groups: examples of variables in the “held constant” categories?

Design: Difference in Differences

Assumption

We assume the observed trend in \(Y\) for “untreated” cases (low AI exposure) is equal to the “counterfactual trend” in \(Y\) for the “treated” cases (high AI exposure, absent AI).

- Equivalently: “treated” and “untreated” have “parallel trends” in \(Y\), absent change in \(X\).

- Equivalently: no variables that affect \(Y\) and change over time differently in “treated” and “untreated” cases

Should we believe the assumption of “parallel trends”?

That is, that the counterfactual trend (without AI) in high-AI-exposure jobs is the same as the factual trend (with AI) in low-AI-exposure jobs?

In groups: examples of variables that change differently over time in low- and high-AI-exposure jobs and affect employment?

Design: Difference in Differences

When is the “parallel trends” assumption plausible?

- Do the treated and untreated cases have parallel trends before treatment?

Bad news for younger workers

No news for mid-career workers

Design: Difference in Differences

When is the “parallel trends” assumption plausible?

Do the treated and untreated cases have parallel trends before treatment?

- does not prove they would have shared trends after treatment (something else might have changed… differently in treated group)
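One simple way to inspect pre-treatment trends is to compare period-to-period changes in the two groups before treatment. The sketch below uses invented series, and the helper names are hypothetical. Gaps near zero are consistent with parallel pre-trends but, as noted above, do not prove the assumption holds after treatment.

```python
def period_changes(series):
    """Change in the outcome from each period to the next."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


def pre_trend_gaps(treated_pre, untreated_pre):
    """Treated minus untreated change, for each pre-treatment step."""
    if len(treated_pre) != len(untreated_pre):
        raise ValueError("both series must cover the same pre-treatment periods")
    return [
        t - u
        for t, u in zip(period_changes(treated_pre), period_changes(untreated_pre))
    ]


# Made-up pre-treatment outcome series (NOT real data).
parallel = pre_trend_gaps([100, 102, 104, 106], [90, 92, 94, 96])
diverging = pre_trend_gaps([100, 101, 101, 100], [90, 92, 94, 96])

print("Parallel example gaps: ", parallel)
print("Diverging example gaps:", diverging)
```

In the first example the levels differ but the changes match, so every gap is 0. In the second the gaps grow over time, which would make the untreated trend a poor stand-in for the treated counterfactual.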

Are we comparing cases that experience many similar changes over time?

- e.g. software development vs. home-nursing firms may have experienced different economic shocks

Comparing jobs within firms (difference in jobs between quintile \(q\) and quintile \(1\))

| Solution | How Bias Solved | Which Bias Removed | Assumes | Internal Validity | External Validity |
|---|---|---|---|---|---|
| Experiment | Randomization breaks \(W \rightarrow X\) link | All confounding variables | 1. \(X\) is random 2. Change only \(X\) | Highest | Lowest |
| Conditioning | Hold confounders constant | Only variables conditioned on | see above | Lowest | Highest |
| Before and After | Hold confounders constant | Variables unchanging over time | No causes of \(Y\) change w/ \(X\) | Lower | Higher |
| Diff in Diff | Hold confounders constant | Unchanging and similarly changing variables | Parallel trends | Higher | Lower |

AI and Employment

Results point to:

- lower hiring for your age group

- fortunately, effects less pronounced in occupations with more university-degree holders

- no meaningful effect on wages/compensation

Application

Automation and Wages

Acemoglu and Restrepo (2022) investigate:

Has automation of work helped or hurt workers?

Automation and Wages

Data:

- Exposure to automation (\(X\)): industry-level investment in robotics and software and adoption of these technologies outside the US

- Wages (\(Y\)): Hourly Real Wages for workers.

“Cases”:

- Demographic groups defined by race, age, gender, education levels

- For each group, calculate “exposure to automation” based on the industries they work in

- For each group, calculate hourly real wages

Automation and Wages

Rather than looking at wages in industries with more automation, or change in wages in the US over time, use a difference in differences:

They compare:

Change in real wages for demographic groups with high exposure to automation between 1980 and 2016 (change in \(Y\) for group where \(X\) changes)

Change in real wages for demographic groups with low/no exposure to automation between 1980 and 2016 (change in \(Y\) for group where \(X\) does not change)

Assume that counterfactual trend in wages for workers exposed to automation SAME as factual trend in wages for workers not exposed to automation.

For groups with greater increase in automation exposure, greater decline in wages

Automation and Wages

Correlation suggests Automation \(\xrightarrow{causes}\) declining wages

- Can’t be confounding due to unchanging differences b/t demographic groups, industries

- Can’t be confounding due to factors similarly affecting all groups (e.g. national/global changes)

For this to be the causal effect of automation, need to believe that wages for workers exposed / not exposed to automation would have been similar without automation…

No differences in wage trends before automation.

Automation and Wages

It still could be that other things that affect wages changed differently for workers exposed to automation than for those who were not.

- Read the paper to see how authors rule out alternatives (e.g. moving manufacturing jobs elsewhere due to trade competition)

“capital takes what it will in the absence of constraints and technology is a tool that can be used for good or for ill… Yes, [during the Industrial Revolution of the 19th Century] you got progress, but you also had costs that were huge and very long-lasting. A hundred years of much harsher conditions for working people, lower real wages, much worse health and living conditions, less autonomy, greater hierarchy. And the reason that we came out of it wasn’t some law of economics, but rather a grass roots social struggle in which unions, more progressive politics and, ultimately, better institutions played a key role — and a redirection of technological change away from pure automation also contributed importantly.”

- Daron Acemoglu

Luddites?

Yes… Luddites.
